Google has introduced RETVec (Resilient and Efficient Text Vectorizer), a new multilingual text vectorizer designed to help Gmail identify potentially harmful content, including spam and malicious emails.

The project’s GitHub description states, “RETVec has been trained to be resilient to character-level manipulations such as insertion, deletion, typos, homoglyphs, and LEET substitution, among others.”

“The RETVec model is trained on top of a novel character encoder which can encode all UTF-8 characters and words efficiently.”
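To give a rough sense of what encoding arbitrary characters without a vocabulary can look like, here is a minimal sketch that turns each character's Unicode code point into a fixed-width vector of bits. It is an illustration only, not RETVec's actual encoder: the function names, the 24-bit width, and the 16-character word length are assumptions chosen for the example.

```python
# Illustrative sketch only; NOT RETVec's real character encoder.
# Assumptions: 24 bits per character, words padded or truncated to 16 characters.

def encode_char(ch: str, bits: int = 24) -> list[int]:
    """Return the code point of `ch` as a list of bits, least significant first."""
    code_point = ord(ch)
    return [(code_point >> i) & 1 for i in range(bits)]

def decode_char(bit_vector: list[int]) -> str:
    """Inverse of encode_char, used here to check that no information is lost."""
    return chr(sum(bit << i for i, bit in enumerate(bit_vector)))

def encode_word(word: str, max_len: int = 16, bits: int = 24) -> list[list[int]]:
    """Encode a word as a fixed-size grid of bits, zero-padded to max_len rows."""
    rows = [encode_char(ch, bits) for ch in word[:max_len]]
    rows += [[0] * bits for _ in range(max_len - len(rows))]
    return rows

# Any character works, whatever the script, because no vocabulary lookup is involved.
grid = encode_word("héllo")
```

Because the largest Unicode code point (U+10FFFF) fits in 21 bits, a 24-bit vector is wide enough for every character, which is why a scheme of this kind needs no language-specific preprocessing.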

Prominent platforms like Gmail and YouTube depend on text classification models to identify phishing attacks, inappropriate remarks, and scams. However, it is not uncommon for threat actors to develop counter-strategies to circumvent these security measures.

These attackers have been observed using adversarial text manipulation techniques, including invisible characters, keyword stuffing, and homoglyphs.
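The effect of such manipulations is easy to reproduce against a simple keyword filter. The sketch below is a toy example, not any filter Gmail or YouTube uses; the blocklist, the `naive_filter` function, and the sample messages are all hypothetical.

```python
# Toy demonstration of how character-level tricks evade exact keyword matching.
# The blocklist and messages are hypothetical examples.

BLOCKED_PHRASES = {"free money"}

def naive_filter(text: str) -> bool:
    """Flag the text if it contains a blocked phrase (case-insensitive)."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in BLOCKED_PHRASES)

messages = {
    "clean": "Claim your free money now",
    # Homoglyph: Cyrillic 'е' (U+0435) replaces the Latin 'e'.
    "homoglyph": "Claim your fr\u0435\u0435 money now",
    # Invisible character: a zero-width space (U+200B) splits the word.
    "invisible": "Claim your fr\u200bee money now",
    # LEET substitution: digits stand in for letters.
    "leet": "Claim your fr33 m0ney now",
}

results = {name: naive_filter(text) for name, text in messages.items()}
for name, flagged in results.items():
    print(f"{name:10s} flagged={flagged}")
```

Only the unmodified message is flagged. The other three look almost identical to a human reader but contain different code points, so an exact match fails.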

RETVec, which operates out-of-the-box in more than a hundred languages, seeks to facilitate the development of server-side and on-device text classifiers that are both more resilient and more efficient.

Vectorization is a natural language processing (NLP) technique that converts vocabulary words or phrases into their corresponding numerical representations. This conversion facilitates subsequent analytical tasks, including named entity recognition, sentiment analysis, and text classification.
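As a concrete illustration of vectorization in general, the following sketch builds a small word-count (bag-of-words) vectorizer. This is a classic vocabulary-based approach and is not how RETVec works; the helper names and the sample corpus are made up for the example.

```python
from collections import Counter

# Minimal bag-of-words vectorizer: a generic illustration, not RETVec.

def build_vocabulary(corpus: list[str]) -> dict[str, int]:
    """Assign each distinct lowercase token an index in order of first appearance."""
    vocab: dict[str, int] = {}
    for document in corpus:
        for token in document.lower().split():
            vocab.setdefault(token, len(vocab))
    return vocab

def vectorize(text: str, vocab: dict[str, int]) -> list[int]:
    """Map text to a vector of token counts; tokens outside the vocabulary are ignored."""
    vector = [0] * len(vocab)
    for token, count in Counter(text.lower().split()).items():
        index = vocab.get(token)
        if index is not None:
            vector[index] = count
    return vector

corpus = ["win a free prize", "meeting notes attached"]
vocab = build_vocabulary(corpus)

known = vectorize("free free prize", vocab)   # counts land on 'free' and 'prize'
obfuscated = vectorize("fr33 pr1ze", vocab)   # no token is in the vocabulary
```

The second call returns an all-zero vector because neither obfuscated token is in the vocabulary. A downstream classifier then has nothing to work with, which is the weakness that a vectorizer resilient to character-level manipulation is meant to address.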

“Due to its novel architecture, RETVec works out-of-the-box on every language and all UTF-8 characters without the need for text preprocessing, making it the ideal candidate for on-device, web, and large-scale text classification deployments,” according to Google’s Elie Bursztein and Marina Zhang.

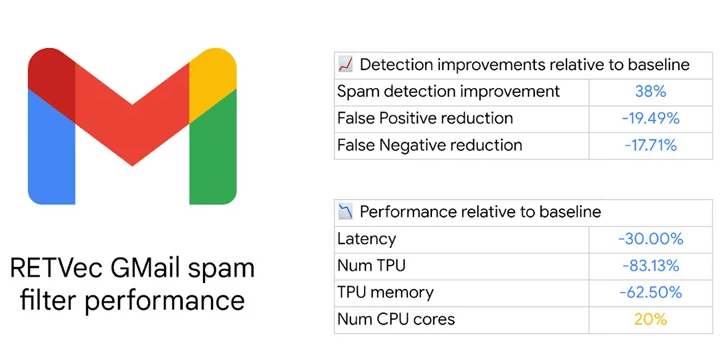

According to Google, incorporating the vectorizer into Gmail improved the spam detection rate by 38% over the baseline and reduced the false positive rate by 19.4%. Additionally, the model’s Tensor Processing Unit (TPU) usage was reduced by 83%.

Models trained with RETVec also run inference faster because of its compact representation. “Delay and computational costs are drastically reduced with smaller models, which is essential for on-device models and large-scale applications,” Bursztein and Zhang added.